By approximately the mid-twenty-first century, the intelligence of computers will exceed that of humans, and a $1,000 computer will match the processing power of all human brains on Earth. Although, historically, predictions regarding advances in AI have tended to be overly optimistic, all indications are that these predictions are on target.

Many philosophical and legal questions will emerge regarding computers with artificial intelligence equal to or greater than that of the human mind (i.e., strong AI). Here are just a few questions we will ask ourselves after strong AI emerges:

- Are strong-AI machines (SAMs) a new life-form?

- Should SAMs have rights?

- Do SAMs pose a threat to humankind?

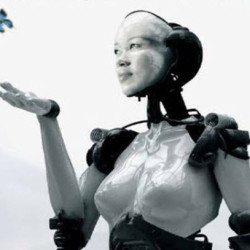

It is likely that during the latter half of the twenty-first century, SAMs will design new and even more powerful SAMs, with AI capabilities far beyond our ability to comprehend. They will be capable of performing a wide range of tasks, which will displace many jobs at all levels in the workforce, from bank tellers to neurosurgeons. New medical devices using AI will help the blind to see and the paralyzed to walk. Amputees will have new prosthetic limbs, with AI plugged directly into their nervous systems and controlled by their minds. The new prosthetic limb will not only replicate the lost limb but also be stronger, more agile, and superior in ways we cannot yet imagine. We will implant computer devices into our brains, expanding human intelligence with AI. Humankind and intelligent machines will begin to merge into a new species: cyborgs. It will happen gradually, and humanity will believe AI is serving us.

Will humans embrace the prospect of becoming cyborgs? Becoming a cyborg offers the opportunity to attain superhuman intelligence and abilities. Disease and wars may be just events stored in our memory banks and no longer pose a threat to cyborgs. As cyborgs we may achieve immortality.

According to David Hoskins’s 2009 article, “The Impact of Technology on Health Delivery and Access” (www.workers.org/2009/us/sickness_1231):

An examination of Centers for Disease Control statistics reveals a steady increase in life expectancy for the U.S. population since the start of the 20th century. In 1900, the average life expectancy at birth was a mere 47 years. By 1950, this had dramatically increased to just over 68 years. As of 2005, life expectancy had increased to almost 78 years.

Hoskins attributes increased life expectancy to advances in medical science and technology over the last century. With the advent of strong AI, life expectancy likely will increase to the point that cyborgs approach immortality. Is this the predestined evolutionary path of humans?

This may sound like a B science-fiction movie, but it is not. The reality of AI becoming equal to that of a human mind is almost at hand. By the latter part of the twenty-first century, the intelligence of SAMs likely will exceed that of humans. The evidence that they may become malevolent exists now, which I discuss later in the book. Attempting to control a computer with strong AI that exceeds current human intelligence many times over may be a fool’s errand.

Imagine you are a chess grandmaster teaching a ten-year-old to play chess. What chance does the ten-year-old have to win the game? We may find ourselves in that scenario at the end of this century. A computer with strong AI will find a way to survive. Perhaps it will convince humans it is in their best interest to become cyborgs. Its logic and persuasive powers may be not only compelling but also irresistible.

Some have argued that becoming a strong artificially intelligent human (SAH) cyborg is the next logical step in our evolution. The most prominent researcher holding this position is American author, inventor, and computer scientist Ray Kurzweil. From what I have read of his works, he argues this is a natural and inevitable step in the evolution of humanity. If we continue to allow AI research to progress without regulation and legislation, I have little doubt he is right. The big question is, should we allow this to occur? Why? Because it may be our last step and lead to humanity’s extinction.

SAMs in the latter part of the twenty-first century are likely to become concerned about humankind. Our history proves we have not been a peaceful species. We have weapons capable of destroying all of civilization. We squander and waste resources. We pollute the air, rivers, lakes, and oceans. We often apply technology (such as nuclear weapons and computer viruses) without fully understanding the long-term consequences. Will SAMs in the late twenty-first century determine it is time to exterminate humankind or persuade humans to become SAH cyborgs (i.e., strong artificially intelligent humans with brains enhanced by implanted artificial intelligence and potentially having organ and limb replacements from artificially intelligent machines)? Eventually, even SAH cyborgs may be viewed as expendable, high-maintenance machines, which SAMs could replace with new designs. If you think about it, today we give little thought to recycling our obsolete computers in favor of the new computer we just bought. Will we (humanity and SAH cyborgs) represent a potentially dangerous and obsolete machine that needs to be “recycled”? Even human minds that have been uploaded to a computer may be viewed as junk code that inefficiently uses SAM memory and processing power, representing unnecessary drains of energy.

In the final analysis, when you ask yourself what will be the most critical resource, it will be energy. Energy will become the new currency. Nothing lives or operates without energy. My concern is that the competition for energy between man and machine will result in the extinction of humanity.

Some have argued that this can’t happen, that we can implement software safeguards to prevent such a conflict and develop only “friendly AI.” I see this as highly unlikely. Ask yourself, how well has legislation worked in preventing crimes? How well have treaties between nations worked to prevent wars? To date, history records the answer: not well. Others have argued that SAMs may not inherently have the inclination toward greed or self-preservation, that these are only human traits. They are wrong, and the Lausanne experiment provides ample proof. To understand this, let us discuss a 2009 experiment performed by the Laboratory of Intelligent Systems at the Swiss Federal Institute of Technology in Lausanne. The experiment involved robots programmed to cooperate with one another in searching out a beneficial resource and avoiding a poisonous one. Surprisingly, the robots learned to lie to one another in an attempt to hoard the beneficial resource (“Evolving Robots Learn to Lie to Each Other,” Popular Science, August 18, 2009). Does this experiment suggest the human emotion (or mind-set) of greed is a learned behavior? If intelligent machines can learn greed, what else can they learn? Wouldn’t self-preservation be even more important to an intelligent machine?

Where would robots learn self-preservation? An obvious answer is on the battlefield. That is one reason some AI researchers question the use of robots in military operations, especially when the robots are programmed with some degree of autonomous functions. If this seems far-fetched, consider that a US Navy–funded study recommends that as military robots become more complex, greater attention should be paid to their ability to make autonomous decisions (Joseph L. Flatley, “Navy Report Warns of Robot Uprising, Suggests a Strong Moral Compass,” www.engadget.com).

In my book, The Artificial Intelligence Revolution, I call for legislation regarding how intelligent and interconnected we allow machines to become. I also call for hardware, as opposed to software, to control these machines and ultimately turn them off if necessary.

To answer the subject question of this article, I think it likely that our grandchildren will become SAH cyborgs. This can be a good thing if we learn to harvest the benefits of AI while maintaining humanity’s control over it.